Over the last decade, artificial intelligence has undergone a massive transformation. Innovation has moved away from deterministic rule engines, scripted chatbots, and narrowly trained machine learning models toward a new era of generative intelligence, enabling systems that can reason across various domains and produce human-like responses.

At the center of this transformation are Large Language Models (LLMs). LLMs aren’t just an upgrade to Natural Language Processing (NLP); they represent a paradigm shift in how machines understand, generate, and interact with human language. From powering conversational agents to coding copilots, knowledge retrieval, and agentic AI systems, LLMs have become the foundational layer of modern artificial intelligence.

This blog provides a comprehensive explanation of what large language models are, how they work, and what actually differentiates them from traditional AI systems.

Large Language Models (LLMs) Explained

A large language model is a deep learning model trained on massive volumes of text to understand, predict, and generate language based on learned probability patterns. By combining massive datasets with billions of parameters, LLMs have transformed the way humans interact with technology. Much of their training data is gathered from the public internet by web crawlers, supplemented by other sources such as books and code.

Specifically, LLMs use deep learning, a type of machine learning, to learn how characters, words, and sentences work together. Deep learning enables probabilistic modeling of unstructured data, so language patterns can be learned at scale. Model performance can be further improved through fine-tuning or prompt engineering, depending on the use case.

Well-known modern LLMs include OpenAI’s GPT models (which power ChatGPT), Google Gemini, and Anthropic’s Claude.

What are Large Language Models Used For?

Below are the most prominent use cases of LLMs.

01. Conversational AI and intelligent agents

Conversational intelligence is one of the most common applications of LLMs. Assistants powered by LLMs are capable of multi-turn contextual reasoning, semantic memory retention, and dynamic response generation. These systems are therefore used in enterprise customer support platforms, internal IT helpdesks, AI copilots for SaaS products, and voice-enabled agents integrated with backend systems.
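Multi-turn assistants typically resend recent conversation history with every request so the model can use earlier turns as context. The sketch below illustrates that idea with a hypothetical `ConversationBuffer` class that keeps as many recent turns as fit within a token budget. It approximates tokens by whitespace-separated words, which is an assumption made for simplicity; production systems count tokens with the model’s own tokenizer and respect its context-window limit.

```python
from dataclasses import dataclass, field


@dataclass
class ConversationBuffer:
    """Hypothetical helper: keeps the most recent turns that fit in a rough token budget."""
    max_tokens: int = 50
    system_prompt: str = "You are a helpful support assistant."
    turns: list = field(default_factory=list)  # list of (role, text) tuples

    @staticmethod
    def _count(text: str) -> int:
        # Crude approximation: one token per whitespace-separated word.
        return len(text.split())

    def add(self, role: str, text: str) -> None:
        self.turns.append((role, text))

    def context(self) -> list:
        """Return the system prompt plus as many recent turns as fit in the budget."""
        budget = self.max_tokens - self._count(self.system_prompt)
        kept = []
        # Walk backwards from the newest turn so the most recent context survives.
        for role, text in reversed(self.turns):
            cost = self._count(text)
            if cost > budget:
                break
            kept.append((role, text))
            budget -= cost
        kept.reverse()
        return [("system", self.system_prompt)] + kept


buf = ConversationBuffer(max_tokens=20)
buf.add("user", "My VPN keeps disconnecting every few minutes.")
buf.add("assistant", "Which operating system are you using?")
buf.add("user", "Windows 11.")
for role, text in buf.context():
    print(f"{role}: {text}")
```

With a 20-word budget, the oldest user message no longer fits, so only the system prompt and the two most recent turns are sent. Real assistants often summarize dropped turns instead of discarding them outright.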

02. Code generation and automation

In engineering environments, LLMs are used to generate code in multiple programming languages, detect bugs, and write tests. These capabilities are embedded within IDE copilots, CI/CD pipelines, and low-code or no-code platforms. Because they learn the structure and conventions of programming languages, LLMs can significantly reduce cognitive load for developers and help teams deliver faster without compromising quality.

03. Context-aware content generation

LLMs are widely used for content generation. Whether it’s technical documentation and whitepapers, marketing and brand narratives, legal and policy drafts, or financial summaries, LLMs adapt tone, structure, and depth dynamically, enabling context-sensitive content aligned with brand voice and domain constraints.

04. Automation of business workflows

LLMs play a crucial role in intelligent process automation. When LLMs are integrated with robotic process automation, workflow orchestration tools, and agentic AI frameworks, they deliver contextual decisions, trigger downstream actions, and coordinate across multiple tools & systems.

How Do Large Language Models Work?

To understand how LLMs work, it helps to know the technological foundations they are built on. Here is an in-depth look at each of them.

1. Machine learning: Teaching systems through data

At the most fundamental level, LLMs are powered by machine learning, a branch of artificial intelligence focused on learning patterns from data rather than relying on explicitly programmed rules.

Machine learning systems are exposed to enormous volumes of text and allowed to identify recurring patterns, detect relationships between symbols, and learn statistical regularities on their own.
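To make "statistical regularities" concrete, the sketch below counts which word follows which in a tiny made-up corpus and turns those counts into next-word probabilities. This bigram model is a deliberately simple stand-in (the corpus and the `next_word_probs` helper are illustrative); LLMs learn far richer patterns with neural networks, but the principle of learning from data rather than hand-written rules is the same.

```python
from collections import Counter, defaultdict

corpus = [
    "the model reads the text",
    "the model predicts the next word",
    "the next word depends on context",
]

# Count how often each word follows each preceding word.
follow_counts = defaultdict(Counter)
for sentence in corpus:
    words = sentence.split()
    for prev, nxt in zip(words, words[1:]):
        follow_counts[prev][nxt] += 1


def next_word_probs(prev: str) -> dict:
    """Convert raw follow-up counts into a probability distribution."""
    counts = follow_counts[prev]
    total = sum(counts.values())
    return {word: n / total for word, n in counts.items()} if total else {}


print(next_word_probs("the"))  # {'model': 0.4, 'text': 0.2, 'next': 0.4}
```

No rule says "model" tends to follow "the"; the pattern is simply observed in the data, which is the core idea machine learning builds on.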

2. Deep learning: Learning through probability

Deep learning is a more advanced form of machine learning designed to handle complex, high-dimensional data like language. These models learn by observing how patterns occur, estimating probabilities of what occurs next, and continuously refining predictions over time.

Over time, this enables LLMs to generate better answers to queries and become exceptionally good at predicting language the way humans use it.
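In a neural language model, the final layer produces a raw score (a logit) for every token in the vocabulary, and a softmax function turns those scores into next-token probabilities. The four-word vocabulary and the logit values below are invented for illustration; a real model scores tens of thousands of tokens at every step.

```python
import math
import random


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


vocab = ["cat", "dog", "car", "idea"]
logits = [2.0, 1.5, 0.2, -1.0]  # hypothetical scores for the next token

probs = softmax(logits)
for token, p in zip(vocab, probs):
    print(f"{token}: {p:.3f}")

# Greedy decoding picks the most likely token; sampling from the
# distribution adds variety, which is why LLM outputs can differ between runs.
greedy = vocab[probs.index(max(probs))]
rng = random.Random(0)
sampled = rng.choices(vocab, weights=probs, k=1)[0]
print("greedy:", greedy, "| sampled:", sampled)
```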

3. Neural networks: Core of LLMs

To support this contextual level of learning, LLMs are built using artificial neural networks. A neural network consists of an input layer where data enters, multiple hidden layers where patterns are learned, and an output layer where predictions are generated.

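Each layer transforms the information it receives, typically by computing weighted sums of its inputs and applying a nonlinear activation function, and passes the result forward to the next layer. As data moves through the network, it is progressively transformed into meaningful representations.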
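The layer-by-layer flow can be sketched as a tiny forward pass: each layer computes weighted sums of its inputs plus a bias, and the hidden layer then applies a ReLU activation. The weights below are arbitrary numbers chosen for illustration; in a real model they are learned during training, and LLMs stack many far larger layers.

```python
def relu(x):
    """Rectified linear unit: passes positive values, zeroes out negative ones."""
    return max(0.0, x)


def dense(inputs, weights, biases, activation=None):
    """Fully connected layer: out[j] = activation(sum_i inputs[i] * weights[i][j] + biases[j])."""
    outputs = []
    for j in range(len(biases)):
        z = sum(inputs[i] * weights[i][j] for i in range(len(inputs))) + biases[j]
        outputs.append(activation(z) if activation else z)
    return outputs


# Input layer: a 3-dimensional feature vector (illustrative values).
x = [1.0, 0.5, -0.5]

# Hidden layer: 3 inputs -> 2 units, with ReLU activation.
w1 = [[0.2, -0.4], [0.6, 0.1], [-0.3, 0.8]]
b1 = [0.1, 0.0]
hidden = dense(x, w1, b1, activation=relu)

# Output layer: 2 hidden units -> 2 raw scores (no activation).
w2 = [[1.0, -1.0], [0.5, 0.5]]
b2 = [0.0, 0.1]
output = dense(hidden, w2, b2)
print("hidden:", hidden, "output:", output)
```

Here the second hidden unit’s weighted sum is negative, so ReLU zeroes it out; nonlinearities like this are what let stacked layers represent patterns that a single weighted sum cannot.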

4. Transformer models: Learning the deep context

When you search on Google, the results reflect the intent behind your whole query rather than isolated keywords, in part because Google uses transformer models to interpret queries, and LLMs are built on the same architecture. Language is inherently contextual: the meaning of a word often depends entirely on the words around it, the sentence structure, and the broader conversation. Transformer models address this through a mechanism called self-attention.

Self-attention allows the model to examine all the words in a sentence at the same time, determine which words are most relevant to each other, and understand how meaning changes based on context. This capability enables LLMs to connect ideas across long passages and recognize how sentences relate to and influence one another.

That’s how a user gets a response to their query based on intent and contextual understanding. As transformer models are trained on massive datasets, they develop a strong ability to associate words, phrases, and concepts based on how they appear together across millions of examples.
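Self-attention can be sketched in a few lines. The version below is single-head scaled dot-product attention, softmax(QK^T / sqrt(d)) V, applied to tiny hand-picked vectors that stand in for word representations. As a simplification, it reuses the same vectors as queries, keys, and values; real transformers learn separate query, key, and value projections and run many attention heads in parallel. The tokens and their 2-D embeddings are hypothetical.

```python
import math


def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Single-head scaled dot-product attention with Q = K = V = vectors (simplification)."""
    d = len(vectors[0])
    outputs, weights = [], []
    for q in vectors:
        # Score the current position against every position in the sequence.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in vectors]
        w = softmax(scores)
        weights.append(w)
        # Each output is a weighted mix of all value vectors.
        outputs.append([sum(w[j] * vectors[j][c] for j in range(len(vectors))) for c in range(d)])
    return outputs, weights


# Hypothetical 2-D embeddings for three tokens.
tokens = ["bank", "river", "money"]
embeddings = [[1.0, 1.0], [2.0, 0.0], [0.0, 2.0]]
out, attn = self_attention(embeddings)
for tok, row in zip(tokens, attn):
    print(tok, [round(x, 2) for x in row])
```

Each row of attention weights sums to 1 and shows how strongly one token draws on every other token when building its new, context-aware representation.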

The combination of machine learning foundations, deep learning, neural network architectures, and transformer-based contextual understanding allows LLMs to function as powerful language intelligence systems rather than simple text predictors. This is why LLMs can adapt to new tasks and topics.


Retrieval-Augmented Generation (RAG) and Tool-Augmented LLMs

While large language models are trained on static datasets, real-world applications often require access to up-to-date or domain-specific information. Retrieval-Augmented Generation (RAG) addresses this by connecting LLMs to external knowledge sources at inference time: relevant data is retrieved and added to the prompt before the model generates a response. This grounding can significantly improve accuracy and reduce hallucinations.
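A minimal retrieval step can be sketched without any external services: score each document against the question, keep the best matches, and insert them into the prompt. This sketch uses word-overlap cosine similarity as a stand-in for the embedding models and vector databases that production RAG systems typically use; the documents, the `build_prompt` helper, and the prompt template are all hypothetical.

```python
import math
import re
from collections import Counter

documents = [
    "Refunds are processed within 5 business days of approval.",
    "Our support team is available Monday to Friday, 9am to 6pm.",
    "Premium plans include priority support and a dedicated manager.",
]


def vectorize(text: str) -> Counter:
    """Bag-of-words term counts (a stand-in for a learned embedding)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, k: int = 1) -> list:
    """Return the k documents most similar to the question."""
    q = vectorize(question)
    ranked = sorted(documents, key=lambda d: cosine(q, vectorize(d)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, k: int = 1) -> str:
    """Ground the model by placing retrieved context ahead of the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, k))
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


print(build_prompt("How many business days do refunds take?"))
```

The resulting prompt would then be sent to the LLM; because the answer is in the supplied context, the model does not have to rely on what it memorized during training.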

In addition, modern LLM systems are frequently enhanced with tool augmentation, enabling models to interact with external APIs, execute functions, or trigger workflows. Together, RAG and tool augmentation make LLM applications more reliable, context-aware, and suitable for production-grade enterprise use cases.
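Tool augmentation usually works as a loop: the model emits a structured request naming a tool and its arguments, the application runs that tool, and the result is fed back to the model. The sketch below shows only the application-side dispatch step. The JSON shape, the tool names, and the `get_order_status` function are hypothetical; real LLM APIs each define their own function-calling format.

```python
import json


def get_order_status(order_id: str) -> dict:
    # Hypothetical backend lookup; a real system would query a database or API.
    fake_db = {"ORD-1": "shipped", "ORD-2": "processing"}
    return {"order_id": order_id, "status": fake_db.get(order_id, "not found")}


def add(a: float, b: float) -> dict:
    return {"result": a + b}


# Registry of tools the model is allowed to call.
TOOLS = {"get_order_status": get_order_status, "add": add}


def dispatch(model_output: str) -> str:
    """Parse a JSON tool call produced by the model, run it, and return a JSON result."""
    try:
        call = json.loads(model_output)
        func = TOOLS[call["tool"]]
        result = func(**call.get("arguments", {}))
    except (json.JSONDecodeError, KeyError, TypeError) as exc:
        # Errors are returned to the model so it can correct its request.
        result = {"error": f"{type(exc).__name__}: {exc}"}
    return json.dumps(result)


# Example: text the model might emit when it decides to call a tool.
print(dispatch('{"tool": "get_order_status", "arguments": {"order_id": "ORD-1"}}'))
```

Restricting calls to an explicit registry is a deliberate design choice: the model can only trigger actions the application has chosen to expose.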

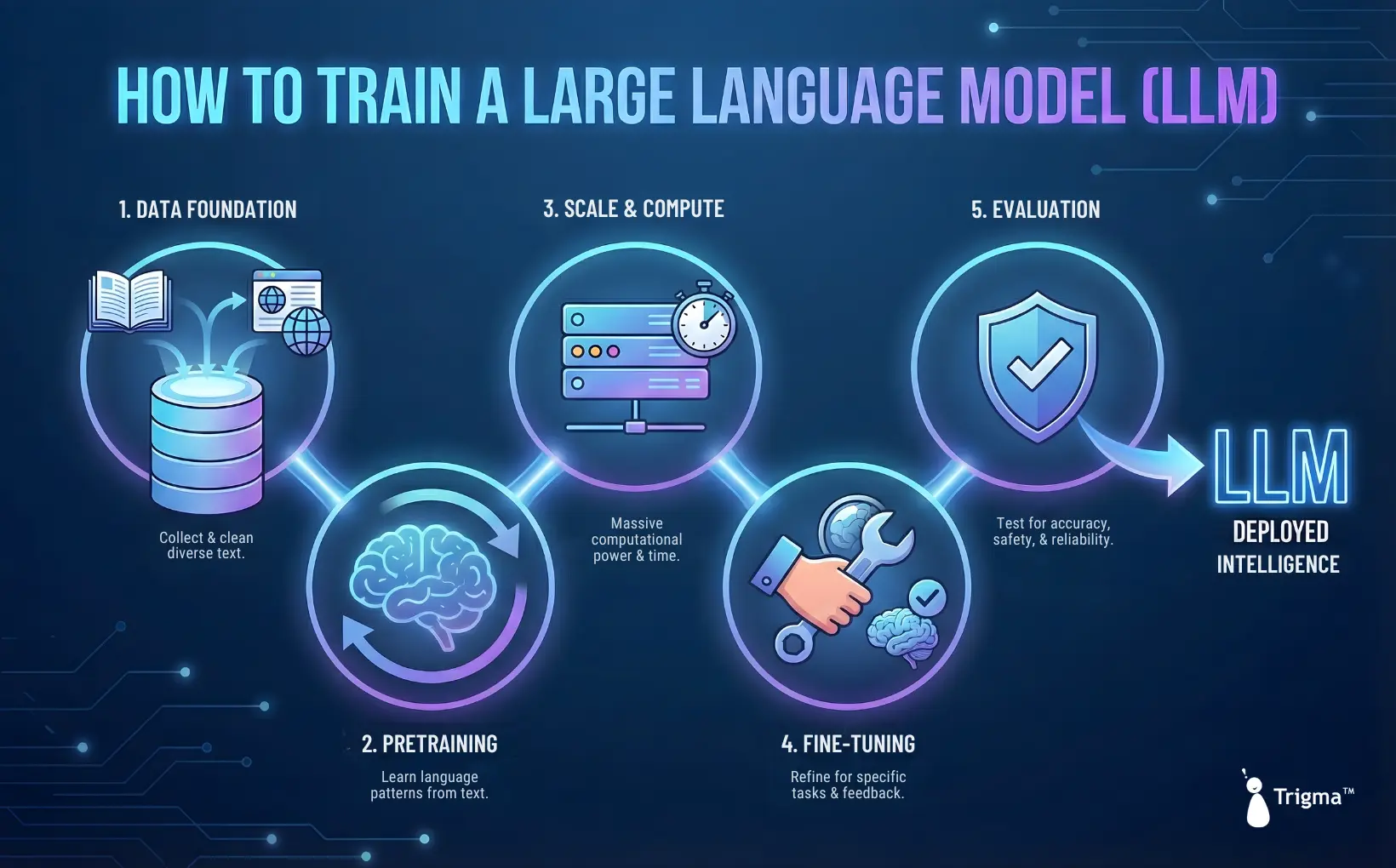

How to Train a Large Language Model (LLM)?

LLMs learn language and behavior at scale, first from data and later from human feedback. They are not programmed with rules; instead, they are trained to recognize patterns and understand context through exposure to vast amounts of data. Here’s how an LLM is trained.

1. Data as the foundation

LLM training begins with large, diverse datasets drawn from sources such as books, articles, technical documentation, code repositories, and public web content. The objective is to expose the model to the many ways language is used across domains, contexts, and writing styles. Before training, the data is cleaned and structured to reduce noise and improve learning efficiency.
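The cleaning step can be illustrated with a few common heuristics: normalizing whitespace, dropping very short fragments, and removing exact duplicates. The `clean_corpus` function and its five-word threshold are illustrative assumptions; real pipelines add near-duplicate detection, language identification, quality scoring, and filtering of sensitive content.

```python
import hashlib
import re


def clean_corpus(raw_docs, min_words=5):
    """Normalize, filter, and exact-deduplicate a list of text documents."""
    seen_hashes = set()
    cleaned = []
    for doc in raw_docs:
        text = re.sub(r"\s+", " ", doc).strip()  # collapse runs of whitespace
        if len(text.split()) < min_words:  # drop fragments too short to be useful
            continue
        digest = hashlib.sha256(text.lower().encode("utf-8")).hexdigest()
        if digest in seen_hashes:  # skip exact (case-insensitive) duplicates
            continue
        seen_hashes.add(digest)
        cleaned.append(text)
    return cleaned


raw = [
    "Transformers use   self-attention to model context.",
    "transformers use self-attention to model context.",
    "Click here!",
    "Pretraining teaches a model the statistical patterns of language.\n",
]
print(clean_corpus(raw))
```

Of the four raw inputs, the case-variant duplicate and the "Click here!" fragment are removed, leaving two clean documents.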

2. Pretraining: How language works

The core learning phase is pretraining, where the model repeatedly predicts missing or next words in text. Each prediction error slightly adjusts the model’s internal parameters, gradually improving accuracy. Over billions of iterations, the model learns the patterns of language, but at this stage it does not yet reliably follow instructions.
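The predict, measure the error, and adjust loop can be shown at toy scale. The sketch below trains a table of next-word scores on a single nine-word sentence using cross-entropy loss and plain gradient descent; the sentence, learning rate, and epoch count are arbitrary choices. An LLM optimizes the same kind of next-token objective, but with a transformer holding billions of parameters and vastly more text.

```python
import math

text = "the cat sat on the mat the cat slept".split()
vocab = sorted(set(text))
idx = {w: i for i, w in enumerate(vocab)}
V = len(vocab)

# One row of next-word scores (logits) per previous word, starting at zero.
logits = [[0.0] * V for _ in range(V)]
pairs = [(idx[a], idx[b]) for a, b in zip(text, text[1:])]


def softmax(row):
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def average_loss():
    """Mean cross-entropy: how surprised the model is by each actual next word."""
    return sum(-math.log(softmax(logits[p])[n]) for p, n in pairs) / len(pairs)


lr = 0.5
initial = average_loss()  # uniform guesses at the start: log(V)
for epoch in range(200):
    for prev, nxt in pairs:
        probs = softmax(logits[prev])
        # Gradient of cross-entropy w.r.t. the logits is (probs - one_hot(target)).
        for j in range(V):
            logits[prev][j] -= lr * (probs[j] - (1.0 if j == nxt else 0.0))

final = average_loss()
best_after_the = vocab[max(range(V), key=lambda j: logits[idx["the"]][j])]
print(f"loss before: {initial:.3f}, after: {final:.3f}")
print("most likely word after 'the':", best_after_the)
```

The loss cannot reach zero, because "the" is followed by different words in the data; the model instead learns the observed odds, which is exactly the probabilistic behavior described above.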

3. Scale and compute

Pretraining a modern LLM requires enormous computational resources, often thousands of GPUs running for weeks or months. This scale is not optional: many advanced capabilities appear to emerge only once models reach sufficient size and data exposure.
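A widely used rule of thumb from the scaling-law literature estimates training compute as roughly 6 × N × D floating-point operations, where N is the number of parameters and D the number of training tokens. The sketch below applies it to a hypothetical 70-billion-parameter model trained on 2 trillion tokens across 2,048 GPUs. The per-GPU throughput (312 TFLOP/s, the NVIDIA A100’s dense BF16 peak) and the 40% utilization figure are assumptions; real training runs vary widely.

```python
def training_days(params, tokens, num_gpus, peak_flops_per_gpu, utilization):
    """Estimate wall-clock training days using the ~6 * N * D FLOPs approximation."""
    total_flops = 6 * params * tokens
    effective_flops_per_sec = num_gpus * peak_flops_per_gpu * utilization
    return total_flops / effective_flops_per_sec / 86_400  # 86,400 seconds per day


# Hypothetical run: 70B parameters, 2T tokens, 2,048 GPUs.
days = training_days(
    params=70e9,
    tokens=2e12,
    num_gpus=2048,
    peak_flops_per_gpu=312e12,  # assumed A100 BF16 dense peak
    utilization=0.4,            # assumed fraction of peak actually achieved
)
print(f"~{days:.0f} days")
```

Under these assumptions the estimate comes to roughly 38 days of continuous computation on more than two thousand GPUs, which illustrates why pretraining is measured in weeks or months.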

4. Fine-tuning and alignment

Once the model is pretrained, it is refined through fine-tuning, where it is trained on smaller, curated datasets to improve instruction following, response clarity, and practical usefulness. This often includes learning from human feedback (for example, reinforcement learning from human feedback, or RLHF), where preferred responses are reinforced so the model’s outputs become more helpful.
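One common way to use human feedback is to train a reward model on pairs of responses where a person marked one as preferred. A standard pairwise objective (a Bradley-Terry-style loss) is -log(sigmoid(r_chosen - r_rejected)), which is small when the preferred response receives the higher score. The reward values below are made-up numbers; in practice they come from a neural reward model, and the LLM is then optimized against that reward.

```python
import math


def pairwise_preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """-log(sigmoid(r_chosen - r_rejected)), computed in a numerically stable way."""
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(m)) == log(1 + exp(-m)); split by sign so exp() never overflows.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# Hypothetical reward scores for (preferred, rejected) response pairs.
print(pairwise_preference_loss(2.0, -1.0))  # preferred scored higher: small loss
print(pairwise_preference_loss(-1.0, 2.0))  # preferred scored lower: large loss
print(pairwise_preference_loss(0.5, 0.5))   # tie: loss equals log(2)
```

Minimizing this loss over many human-labeled pairs teaches the reward model to score responses the way people prefer them.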

5. Evaluation and ongoing improvement

Before deployment, LLMs are tested across benchmarks and real-world scenarios. Even after release, models are updated through fine-tuning and evaluation to improve performance, safety, and reliability.
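At its simplest, benchmark evaluation means running the model on held-out questions with known answers and scoring its outputs. The sketch below computes exact-match accuracy after light normalization. `toy_model` is a hypothetical stand-in for a real model call, and the three-question benchmark is invented; real evaluations use far larger benchmarks plus separate checks for safety and reliability.

```python
def normalize(answer: str) -> str:
    """Lowercase and drop punctuation so formatting differences don't count as errors."""
    return "".join(ch for ch in answer.lower() if ch.isalnum() or ch.isspace()).strip()


def exact_match_accuracy(model, benchmark):
    """Fraction of benchmark items where the model's answer matches the reference."""
    correct = sum(normalize(model(q)) == normalize(ref) for q, ref in benchmark)
    return correct / len(benchmark)


def toy_model(question: str) -> str:
    # Hypothetical stand-in for an LLM call.
    canned = {"Capital of France?": "Paris.", "2 + 2 = ?": "4", "Largest planet?": "Saturn"}
    return canned.get(question, "I don't know")


benchmark = [
    ("Capital of France?", "Paris"),
    ("2 + 2 = ?", "4"),
    ("Largest planet?", "Jupiter"),
]
accuracy = exact_match_accuracy(toy_model, benchmark)
print(f"exact match: {accuracy:.2f}")
```

Exact match is easy to automate but strict; open-ended tasks usually need additional metrics or human review.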

Difference Between Large Language Models and Traditional AI Systems

Here’s a detailed comparison of how LLMs and traditional AI systems differ from each other.

| Basis | Traditional AI systems | LLMs |
|---|---|---|
| Core approach | Rule-based logic or narrowly trained machine learning models | Data-driven probabilistic models trained on massive datasets |
| Scope | Designed for specific, predefined tasks | General-purpose language intelligence across domains |
| Learning method | Requires explicit feature engineering and labeled data | Learns patterns through self-supervised deep learning |
| Handling language | Relies on keyword matching, intent classification, and templates | Understands context, semantics, and nuance through attention mechanisms |
| Context awareness | Limited or short-term context handling | Maintains rich contextual understanding across long inputs (within the model’s context window) |
| Response generation | Predefined or template-based outputs | Dynamically generated, human-like responses |
| System behavior | Deterministic and predictable | Probabilistic and flexible |
| Integration style | Operates as isolated components | Functions as an intelligence layer across systems |
| Maintenance effort | High; rules and models must be continuously updated | Lower; behavior improves through retraining and alignment |
| Use cases | Rule engines, basic chatbots, form validation, expert systems | Conversational AI, copilots, code generation, content generation, workflow automation |

Summing Up

LLMs represent a massive shift in how artificial intelligence is built and applied. Rather than operating as task-specific tools or rule-based systems, LLMs function as general-purpose intelligence layers, capable of understanding language, reasoning across contexts, and generating meaningful outputs at scale.

In this blog, we explored what LLMs are, how they work, and how they are trained. By learning patterns from massive amounts of text, LLMs move beyond rigid automation and deliver more adaptive, context-aware results. As organizations move toward AI-native products and agentic systems, LLMs are becoming the backbone of intelligent software. Those who invest in understanding and implementing them thoughtfully will be better positioned to build scalable, resilient, and future-ready solutions.

At Trigma, we help organizations design, build, and scale AI solutions powered by LLMs and agentic AI frameworks. Whether you’re exploring AI adoption or looking to build intelligent systems, our technical experts ensure your AI strategy is practical, secure, and built for long-term value.

Ready to Accelerate Your AI Journey?

Explore how Trigma can help you build custom AI solutions, backed by continuous support.