60-Second Summary

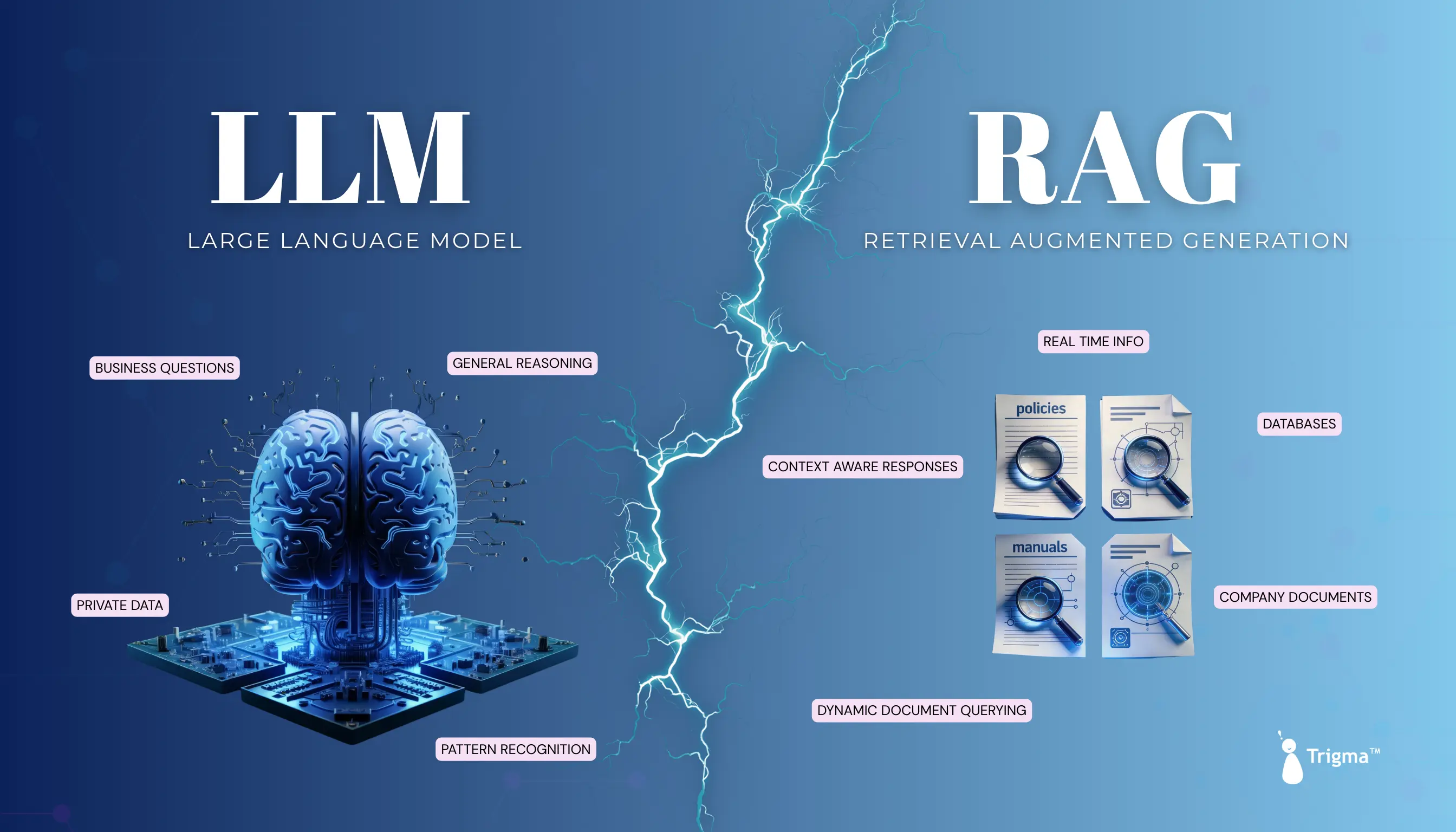

- AI products often fail when standalone LLMs can’t handle real business questions or private data.

- RAG (Retrieval-Augmented Generation) solves this by combining LLMs with company documents, databases, and real-time information.

- Unlike traditional LLMs, RAG delivers accurate, context-aware answers grounded in enterprise data.

- RAG works by indexing internal data, retrieving relevant information, augmenting queries, and generating precise responses.

- Enterprises use RAG for customer support, finance, healthcare, legal research, and internal knowledge management.

- The most scalable approach is a hybrid model: LLMs + RAG for trustworthy, production-ready AI solutions.

You shipped an AI feature that sounds smart but breaks the moment users ask real business questions.

In 2026, founders and tech leaders face a critical choice: rely on standalone LLMs or power them with Retrieval-Augmented Generation (RAG).

With enterprises demanding accuracy, fresh data, and private-context intelligence, this decision directly impacts trust and scalability.

In this post, we'll break down LLM vs RAG, explain where each shines, and help you choose the right architecture for building production-ready AI products.

A Sneak Peek of RAG

Retrieval-Augmented Generation (RAG) is a technique that lets an AI model read and draw on your company documents, such as policies, manuals, and reports.

It pulls information from your proprietary systems such as Google Drive, Confluence, or Jira.

If you ask a RAG system a question such as "What's the refund policy for Europe?", the answer is grounded in your own documents rather than in generic internet content.

Think of RAG as: LLM + private data = context-aware AI.

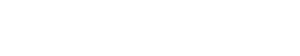

Let's say Tesla publishes a new earnings report. A model such as GPT-4, whose knowledge cutoff was months earlier, knows nothing about that quarterly report.

But what we can do is take that report and store it in your RAG database.

The next time you ask for information about Tesla earnings, the model takes relevant info from the document and appends it to the prompt.

With the relevant passages from the report in the prompt, the model can answer from the document itself. Note that the model is not retrained; RAG supplies the context at query time.
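The "append it to the prompt" step in the earnings report example can be sketched in a few lines of Python. This is a minimal illustration, not a definitive implementation: the function name `build_augmented_prompt`, the prompt wording, and the placeholder excerpt are all assumptions made for this sketch, and no real figures from any report are used.

```python
def build_augmented_prompt(question, passages):
    """Combine retrieved passages and the user's question into one prompt."""
    # Number each passage so the model (and a reader) can tell them apart.
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )

# Hypothetical placeholder standing in for text retrieved from the stored report.
passages = ["<excerpt from the stored earnings report>"]
question = "What did the latest earnings report say about revenue?"

prompt = build_augmented_prompt(question, passages)
print(prompt)
```

The model's weights never change here. Only the prompt does, which is why a newly stored report can be used right away.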

How Does Retrieval Augmented Generation Work?

Though Retrieval Augmented Generation sounds COMPLEX, it's one of the most effective ways of making your LLMs smarter.

RAG augments the capabilities of LLMs through four components that work together:

| Stage | Component | What does it do? |
|---|---|---|
| Step 1 | Indexing | Your organization's internal documents (PDFs, knowledge bases, notes, wikis, codebases) are split into chunks and converted into vector embeddings (numerical representations), which are stored in a vector database. |
| Step 2 | Retrieval | The retrieval layer searches the vector database for the chunks most semantically related to the user's question. Note: think of the retrieval layer as the SEARCH ENGINE. |
| Step 3 | Augmentation | The retrieved chunks are combined with the user's question into a single prompt, so the LLM has the relevant organizational knowledge in front of it when it answers. |
| Step 4 | Generation | The Large Language Model (LLM) produces a response based on the augmented prompt. |
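The four stages in the table can be sketched end to end in plain Python. Treat this as a toy under stated assumptions: the word-count `embed` function stands in for a real embedding model, the in-memory `index` list stands in for a vector database, `generate` is a stub where a real LLM call would go, and the three sample documents are made up for illustration.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

# Step 1: Indexing. Made-up documents, embedded and kept in an in-memory list.
documents = [
    "The refund policy for Europe allows returns within 30 days.",
    "Office hours are 9am to 5pm on weekdays.",
    "Support tickets are answered within one business day.",
]
index = [(doc, embed(doc)) for doc in documents]

# Step 2: Retrieval. Rank stored chunks by similarity to the question.
def retrieve(question, k=1):
    q = embed(question)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]

# Step 3: Augmentation. Put the retrieved chunks and the question in one prompt.
def augment(question, chunks):
    context = "\n".join(chunks)
    return f"Context:\n{context}\n\nQuestion: {question}"

# Step 4: Generation. Stub only; replace with a call to your LLM of choice.
def generate(prompt):
    return f"(model answer based on a {len(prompt)}-character prompt)"

question = "What's the refund policy for Europe?"
chunks = retrieve(question)
prompt = augment(question, chunks)
print(prompt)
print(generate(prompt))
```

In production, the same shape usually holds, but each stage is swapped for a dedicated component: an embedding model, a vector database, a prompt template, and a hosted or self-managed LLM.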

Use Cases of RAG for Enterprises

Here are the main applications of RAG for enterprises:

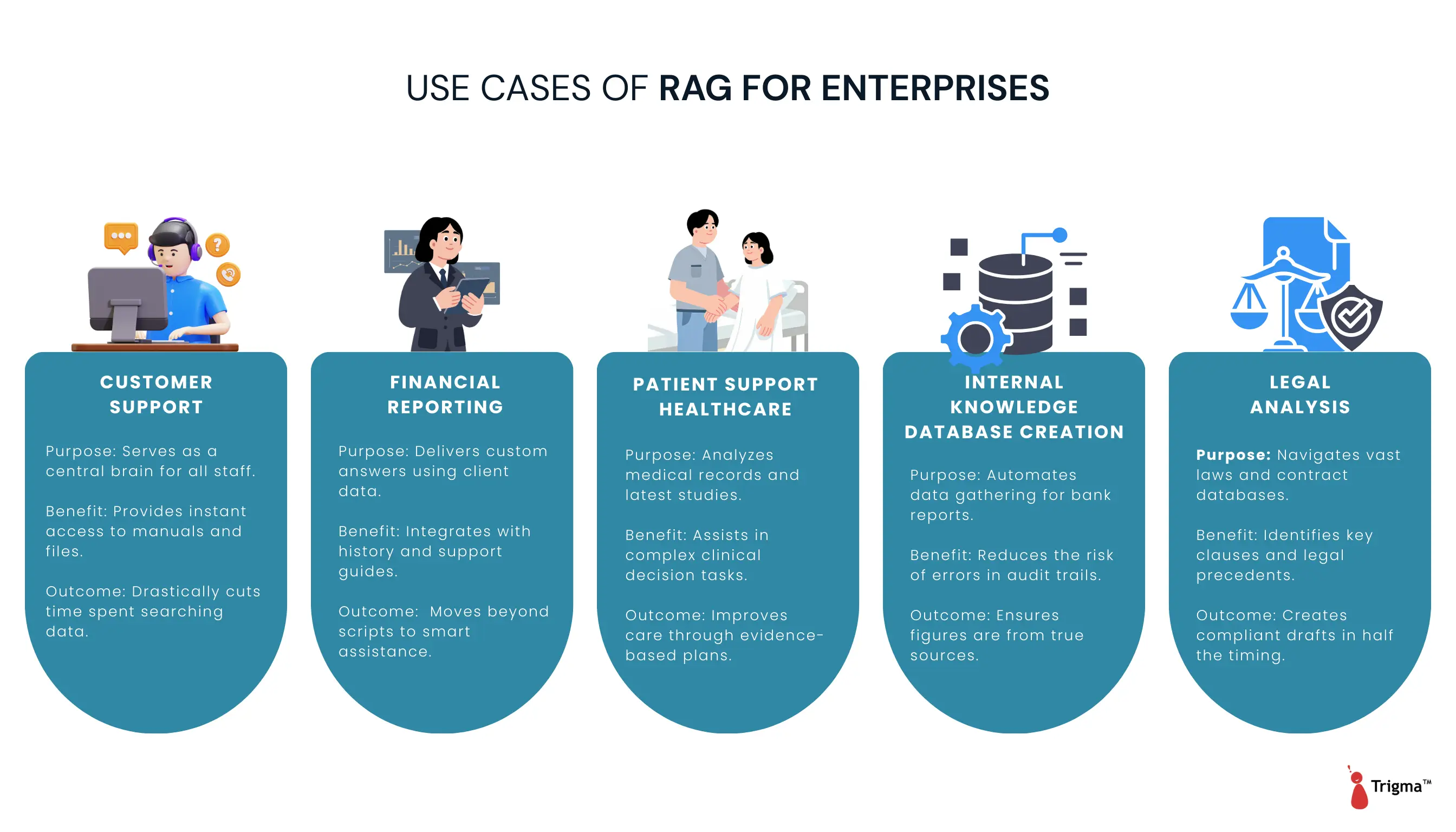

Customer Support

RAG can transform how enterprises handle customer support.

When virtual assistants have access to internal docs, ticket histories, and support guides, they can understand the context of a conversation and provide personalized responses (grounded in customer data), not copy-paste scripts.

As your business scales and your documentation grows, these virtual assistants become SMARTER: newly indexed content is available to them right away, so their answers stay current.

Financial Reporting

Financial analysts spend a lot of time collecting data from different sources to create reports, investment strategies, and risk assessments.

Compared to standard LLMs, RAG systems are a better choice for audits and regular reports because answers are grounded in the source documents, which lowers the risk of errors.

Patient Support in Healthcare

RAG is a strong fit for healthcare research and diagnosis support.

Tools such as IBM Watson Health have been used to analyze large volumes of medical data, such as electronic health records, to support cancer diagnosis and personalized treatment planning.

IBM Watson for Oncology is often cited as an example of this kind of retrieval-backed decision support: its treatment recommendations reportedly matched expert doctors' decisions 96% of the time.

That's how RAG-based systems not only enhance patient care but also free up healthcare professionals to focus on managing patients rather than data.

Internal Knowledge Database Creation

The RAG framework acts as an internal productivity tool for employees to access internal information. Gone are the days when they used to dig for hours to find information from reports or manuals.

Legal Document Analysis

RAG systems help lawyers with legal research and drafting by searching through large legal databases and statutes.

This way, a lawyer can draft contracts that are checked against the relevant legal requirements, and document preparation becomes faster and less error-prone.

What are Large Language Models (LLMs)?

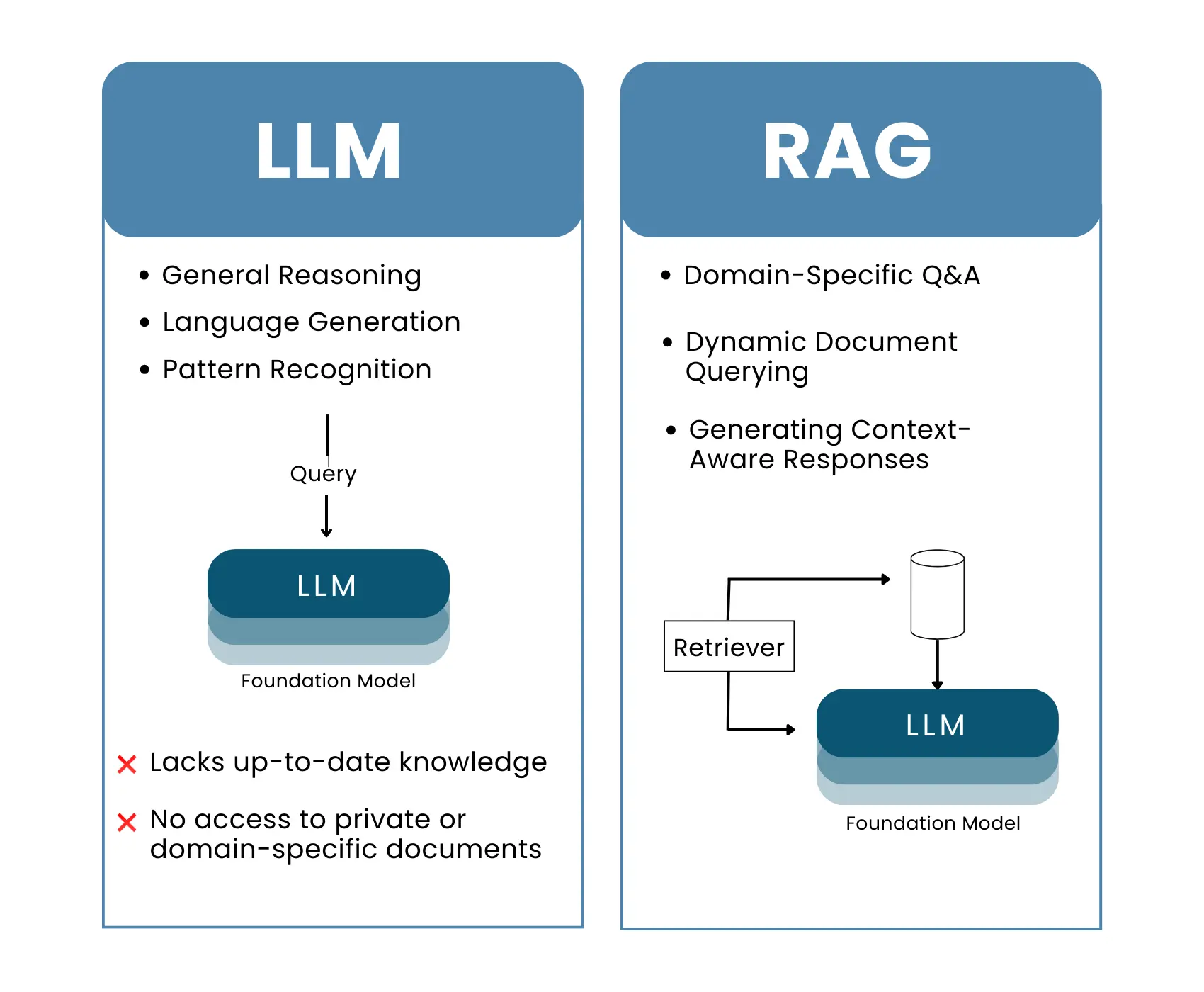

LLMs are the foundation of modern AI applications and are really good at understanding natural language, summarizing content, generating insights, and explaining concepts, but they have two big limitations.

- Unlike agents, they can't log in, retrieve data, or update systems.

- Large language models don't have access to internal company documents, customer support history, or brand style guides.

For companies building generative AI tools, LLMs are the main driver behind the chatbots and AI tools. But when these are fine-tuned or combined with RAG, they become more suitable for enterprise use cases.

How Do Large Language Models Work?

LLMs are trained on massive amounts of text. Using deep learning techniques, the model learns to predict the next word in a sequence, and in the process it picks up patterns such as syntax, context, and meaning.

At answer time, it generates text the same way: one predicted word after another.
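Next-word prediction can be illustrated with a deliberately tiny example. The sketch below is only an analogy: it counts which word follows which in a made-up sentence, whereas a real LLM learns these patterns with a neural network over a vast corpus. The names `corpus`, `follows`, and `predict_next` are chosen for this illustration.

```python
from collections import Counter, defaultdict

# Made-up training text for the illustration.
corpus = "the model reads the text and the model predicts the next word"

# "Training": count how often each word follows each other word.
follows = defaultdict(Counter)
words = corpus.split()
for current, nxt in zip(words, words[1:]):
    follows[current][nxt] += 1

def predict_next(word):
    """Return the word seen most often after `word`, or None if it was never followed."""
    candidates = follows.get(word)
    return candidates.most_common(1)[0][0] if candidates else None

print(predict_next("the"))   # "model" follows "the" twice, more than any other word
print(predict_next("word"))  # None: "word" is the last word, so nothing follows it
```

The limitation discussed in this article shows up even here: the predictor can only repeat patterns from the text it was built on, so anything outside that text is invisible to it.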

Key Differences between LLM and RAG

Here's the side-by-side comparison of how traditional LLMs differ from Retrieval Augmented Generation:

| Basis of Comparison | Standard LLMs | RAG LLMs |
|---|---|---|
| What is it? | Traditional LLMs generate information based on what they were trained on. They don't have access to external information. | RAG adds an extra step before answering questions. As it's connected to external knowledge databases such as APIs, documents, or databases, it provides relevant information. |
| What is its primary use case? | Generate responses purely based on the patterns learned during the training process. | Search external data first (databases, documents, APIs) and then generate information based on retrieved data. |
| Data Usage | Use only pre-existing training data; good for creative and general tasks. | Access external data sources such as internal company documents and knowledge databases, so they're suitable for enterprise and compliance use cases. |
| Flexibility in Responses | No real-time information; responses are limited to what the model already knows. | Adapt responses based on real-time or specialized information. |
| Performance with Long Context | Generate content on the go, but after a certain point, they hallucinate and start giving inaccurate responses. | Handle detailed and specific text because of the retrieval mechanism. |
| Specialization of Tasks | Suitable for tasks that require answering general questions, writing, and summarization. | Best for detailed, fact-based questions. |
| External Dependency | Work independently, no reliance on external data sources. | Dependent on external data sources for better answers. |
| Application | Ideal for content creation, creative writing, and NLP tasks. | Good for areas where accuracy is pivotal, such as legal research, healthcare, medical queries, customer support, etc. |

RAG vs LLM: Which Is the Right Architecture Model for Your Business?

Selecting between RAG and LLM can be a tough choice, especially when the adoption of Gen AI is growing.

When to Use RAG?

RAG is generally a good fit for knowledge-intensive tasks such as enterprise Q&A, document search, and chatbots. Use it when you need accurate answers from private or specialized data.

The Retrieval-Augmented Generation technique is useful in areas like:

- Domains where information changes quickly, such as finance or regulatory compliance, because RAG can pull data from updated sources at query time.

- Customer-facing virtual assistants, which can provide personalized responses based on customer data and history.

- Sectors such as healthcare and legal, which require deep domain data.

In healthcare, a RAG system that can retrieve the latest medical research and a patient's medical history can help clinicians make better clinical decisions.

In legal settings, it helps professionals analyze huge volumes of contracts and make better-informed decisions.

When to Use a Large Language Model?

Use LLMs when you:

- Perform tasks that rely on general knowledge

- Brainstorm marketing copy

- Summarize lengthy reports or meetings

But most organizations don't look for "either/or"; they adopt a hybrid approach. This means they combine the capabilities of RAG with LLM to create:

- Smarter and context-aware applications

- Generative models for natural answers

- Responses that remain accurate using retrieved data
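One way to picture the hybrid approach is a small router that retrieves when the knowledge base has something relevant and otherwise sends the question to the LLM as-is. The sketch below is an assumption-heavy illustration: the word-overlap score, the 0.5 threshold, the `route` function, and the sample document are all invented for this example, and a real system would more likely score relevance with embeddings.

```python
import re

def tokens(text):
    """Lowercased set of words in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def overlap(question, doc):
    """Fraction of the question's words that also appear in the document."""
    q, d = tokens(question), tokens(doc)
    return len(q & d) / len(q) if q else 0.0

def route(question, documents, threshold=0.5):
    """Return ("rag", augmented_prompt) when a document is relevant enough,
    otherwise ("llm", question) so the model answers from general knowledge."""
    best = max(documents, key=lambda d: overlap(question, d), default=None)
    if best is not None and overlap(question, best) >= threshold:
        return "rag", f"Context:\n{best}\n\nQuestion: {question}"
    return "llm", question

# Made-up knowledge base with a single document.
docs = ["The refund policy for Europe allows returns within 30 days."]

print(route("What is the refund policy for Europe?", docs)[0])  # "rag"
print(route("Write a tagline for a coffee brand", docs)[0])     # "llm"
```

The threshold is the main design choice: set it too low and creative requests get irrelevant context attached, too high and factual questions skip the knowledge base.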

How Can Trigma Help You with Data-Driven RAG and LLM Solutions?

At Trigma, we know how to solve complex business challenges using advanced LLMs.

From building agents that enhance customer experiences to creating tools that generate eye-catching images and marketing content, we know how to put these intelligent systems to work for your business.

A client approached Trigma to build a mental health AI chatbot.

They wanted to engage their customers and needed an AI/ML solution that could identify stress levels and then recommend podcasts, blogs, or medical advice to help cope with mental stress.

Result - The AI-powered mental health chatbot delivered measurable improvements in user engagement and support efficiency. Approximately 30% higher engagement was observed as users completed guided conversations, while 30–40% of routine queries were handled autonomously. Continuous learning from user interactions helped improve response relevance and reduced the operational load on support teams.

Need help in developing RAG or an LLM for your business?

FAQs

Which approach works best for customer service applications?

For customer service, RAG generally outperforms standalone LLMs because it pulls relevant information from multiple data sources at query time.

LLMs, though good for conversational responses, struggle to provide accurate answers without real-time data access or external databases.

Can LLMs access external knowledge the way RAG does?

On their own, LLMs can produce human-like text, but they have no connection to external knowledge databases. RAG adds that connection by retrieving information from external systems at query time, which makes the outputs more context-driven.

Are RAG systems more expensive to run than LLMs?

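RAG adds cost on top of a standalone LLM: documents have to be embedded and stored in a vector database, every query runs a retrieval step before generation, and the augmented prompt is longer. How much more it costs depends on the size of your data and your query volume.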

Which industries mostly benefit from RAG and LLMs?

RAG is suited for industries that require accurate and up-to-date information, such as healthcare, finance, and legal services.

LLMs are extensively used for tasks that require content creation, summarization, and translation in the areas of marketing, media, and education.