Ever wondered how AI models can deliver confident, accurate answers when the information they need is constantly changing?

As Large Language Models (LLMs) continue to advance, their ability to deliver human-like responses has grown, but reliability remains a key concern. LLMs struggle to stay current, verify facts, and adapt to specialized business knowledge. This is where Retrieval Augmented Generation (RAG) is transforming the way intelligent systems operate in real-world scenarios.

Rather than relying solely on pre-trained knowledge, RAG enables AI to retrieve relevant, trusted information at the moment a question is asked. RAG lets companies integrate their own data with LLMs, making AI applications more trustworthy and relevant. As companies increasingly adopt AI for decision-making support, customer interactions, and internal knowledge access, RAG is emerging as a critical foundation for building systems that businesses can trust.

In this blog, we’ll explore RAG in AI, how it works, how it differs from semantic search, and why organizations are heavily investing in RAG architectures.

What is RAG in AI?

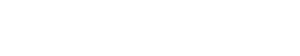

RAG is an AI architecture that combines the strengths of traditional information retrieval systems with the capabilities of generative large language models (LLMs). Instead of relying only on the data it was trained on, as a standalone language model does, a RAG system fetches relevant documents from a knowledge source outside the model, such as a company knowledge base, before generating an answer.

This architecture combines two powerful capabilities:

- Information retrieval from external knowledge sources

- Natural language generation using LLMs (Large Language Models)

Instead of relying solely on what the LLM learned during training, RAG retrieves information from relevant external sources and adds it to the model's prompt before a response is generated.

RAG and Large Language Models (LLMs)

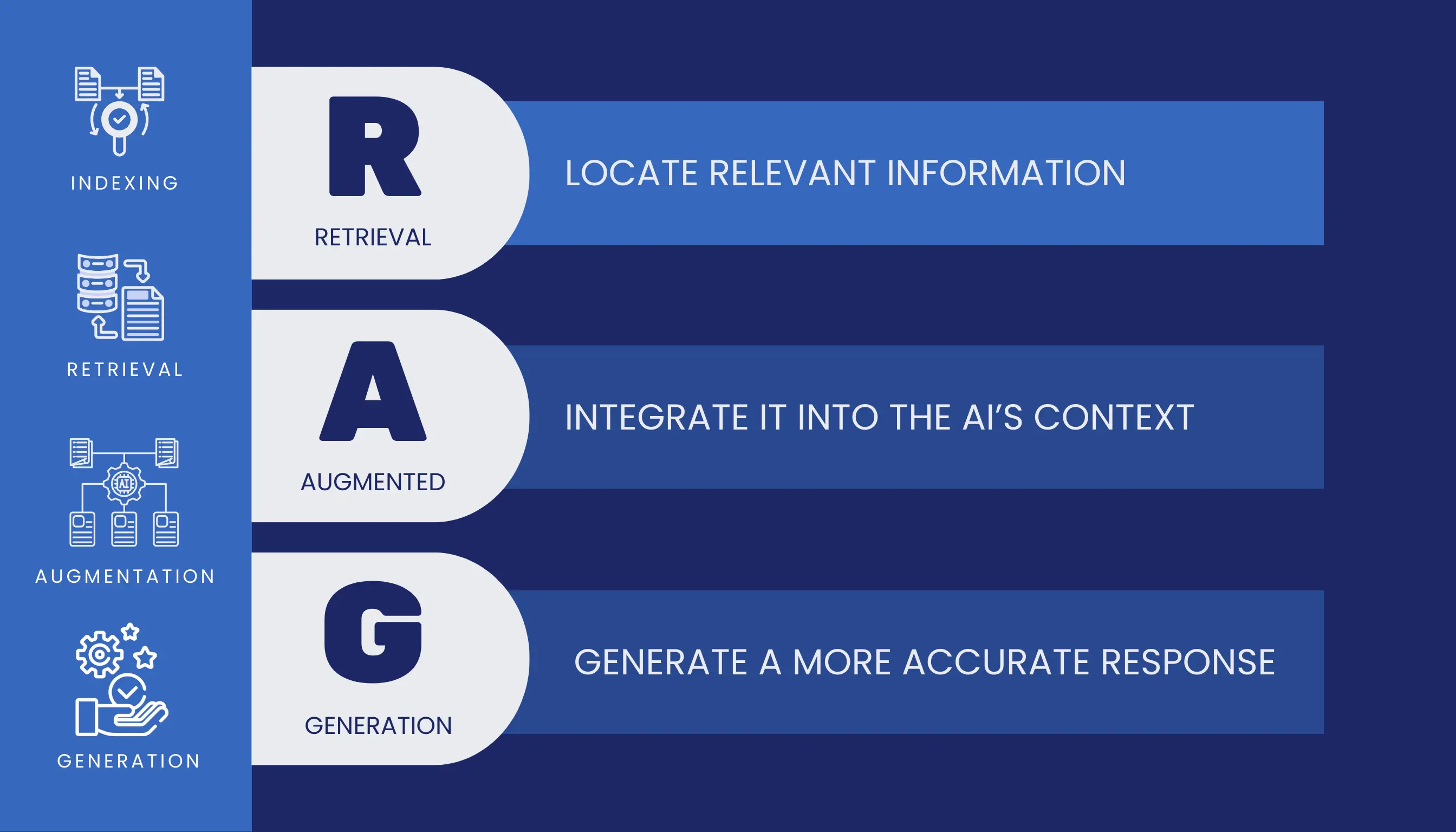

Large Language Models (LLMs) are designed to generate responses by learning patterns from massive volumes of training data. While this enables fluent and contextually aware language generation, an LLM still operates with static knowledge: on its own, it cannot access real-time data or newly updated content.

In this scenario, RAG helps LLMs overcome their knowledge limits by allowing them to fetch the right information from external sources before answering. The combination of RAG and LLMs allows enterprises to move beyond generic AI outputs and toward systems that can reason over specific domain data, comply with internal policies, and adapt as information evolves.

This architecture not only improves response accuracy but also reduces hallucinations, one of the most common challenges in LLM deployments. Pairing LLMs with RAG allows organizations to deploy AI systems that are context-aware, continuously updatable, safer, and more explainable.

To understand how this approach differs from traditional models, see a detailed comparison of LLMs vs RAG.

How RAG (Retrieval Augmented Generation) Works

RAG works by combining information retrieval with text generation to produce accurate and context-aware responses. Instead of relying on the LLM alone, a RAG system retrieves relevant information first and then generates a response. Here’s how it works:

1. User query

When a user submits a query, the system first works out the intent behind it. The input is converted into a numerical representation called an embedding, which captures the semantic meaning of the query.

2. Information retrieval

The embedding is then used to search a vector database containing pre-indexed documents, knowledge bases, and enterprise data. The search returns the pieces of data whose embeddings are closest in meaning to the query.

3. Context

The retrieved content is added to the prompt that is passed to the LLM. This step ensures that the model has access to accurate, up-to-date information before it forms an answer that matches the user’s intent and query.

4. Response generation

Finally, the LLM generates a natural-language answer using both the user’s query and the retrieved context. The response is grounded in current source data rather than only in the model's static training data.
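The four steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the `embed` function is a toy word-count vector standing in for a real embedding model, the `DOCUMENTS` list is a made-up knowledge base standing in for a vector database, and the final LLM call is left out, so the sketch stops at the augmented prompt that would be sent to the model.

```python
import math
import re
from collections import Counter

def embed(text: str) -> Counter:
    # Toy stand-in for an embedding model: a bag-of-words count vector.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse count vectors.
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

# Hypothetical knowledge base, indexed ahead of time.
DOCUMENTS = [
    "Refunds are processed within 5 business days of approval.",
    "Support is available Monday to Friday, 9am to 6pm.",
    "Premium plans include priority support and a dedicated manager.",
]
INDEX = [(doc, embed(doc)) for doc in DOCUMENTS]

def retrieve(query: str, k: int = 2) -> list[str]:
    # Steps 1 and 2: embed the query, then rank indexed documents by similarity.
    q = embed(query)
    ranked = sorted(INDEX, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]

def build_prompt(query: str, context: list[str]) -> str:
    # Step 3: place the retrieved passages in the prompt alongside the question.
    joined = "\n".join(f"- {c}" for c in context)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{joined}\n\nQuestion: {query}"
    )

query = "How long do refunds take?"
context = retrieve(query, k=1)
prompt = build_prompt(query, context)
# Step 4 would send `prompt` to an LLM of your choice.
print(prompt)
```

Swapping the toy `embed` for a real embedding model and the in-memory `INDEX` for a vector database changes the quality of retrieval, but the shape of the flow stays the same.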

Types of RAG Architectures

Below are the common RAG architecture types.

Vector-based RAG

Vector-based RAG works with a specialized vector database that stores information as numerical embeddings. This format allows AI systems to understand semantic meaning and retrieve the most relevant context efficiently.

Knowledge graph-based RAG

In this approach, information is organized into nodes (entities) and the relationships between them. By leveraging knowledge graphs, RAG systems can identify meaningful connections between entities, enabling more structured reasoning and human-like understanding.
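As a rough sketch of graph-based retrieval, the example below stores facts as (subject, relation, object) triples and collects everything reachable from an entity within a fixed number of hops. The triples, the entity names, and the `neighborhood` helper are all invented for illustration; a real deployment would typically use a graph database and an entity-linking step to find the starting node.

```python
from collections import defaultdict

# Hypothetical (subject, relation, object) triples.
TRIPLES = [
    ("Acme Pro", "is_a", "subscription plan"),
    ("Acme Pro", "includes", "priority support"),
    ("priority support", "handled_by", "Tier 2 team"),
    ("Acme Basic", "is_a", "subscription plan"),
]

# Adjacency list: node -> outgoing (relation, neighbor) edges.
GRAPH = defaultdict(list)
for subj, rel, obj in TRIPLES:
    GRAPH[subj].append((rel, obj))

def neighborhood(entity: str, hops: int = 2) -> list[str]:
    """Collect facts reachable from `entity` within `hops` edges, breadth-first."""
    facts, frontier, seen = [], [entity], {entity}
    for _ in range(hops):
        next_frontier = []
        for node in frontier:
            for rel, obj in GRAPH.get(node, []):
                facts.append(f"{node} {rel} {obj}")
                if obj not in seen:
                    seen.add(obj)
                    next_frontier.append(obj)
        frontier = next_frontier
    return facts

facts = neighborhood("Acme Pro")
print(facts)
```

The second hop is what surfaces the connection between the plan and the support team, a link that a single similarity lookup on the plan name alone might miss.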

Ensemble RAG

Ensemble RAG runs multiple retrievers in parallel and combines their outputs. This approach improves reliability by allowing different retrieval methods to complement each other and cross-check results.
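One common way to combine the outputs of several retrievers is reciprocal rank fusion (RRF), which is only one of several possible fusion strategies. The sketch below assumes each retriever has already returned a ranked list of document IDs; the IDs and the two result lists are made up, and `k = 60` is the constant conventionally used with RRF.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    # Each document earns 1 / (k + rank) from every ranking it appears in.
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties are broken alphabetically for stability.
    return sorted(scores, key=lambda d: (-scores[d], d))

# Hypothetical results from two different retrievers for the same query.
keyword_hits = ["doc_a", "doc_b", "doc_c"]
vector_hits = ["doc_b", "doc_d", "doc_a"]

fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
print(fused)
```

Documents that both retrievers agree on (`doc_a` and `doc_b` here) rise above documents that only one retriever found, which is the cross-checking effect described above.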

Agentic RAG

Agentic RAG introduces autonomous decision-making into the retrieval process. Instead of relying on a single retrieval step, the system can plan, iterate, and decide what information to fetch and when. This enables deeper reasoning, better context refinement, and more reliable responses for complex queries.
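The plan-and-iterate loop can be sketched as follows. Everything here is a simplified assumption: `plan_next_step` is a rule-based stand-in for the LLM that would normally decide what to fetch next, and `KNOWLEDGE` is a made-up lookup table standing in for real retrievers or tools.

```python
from typing import Optional

# Hypothetical knowledge base keyed by topic.
KNOWLEDGE = {
    "refund policy": "Refunds are issued within 5 business days.",
    "premium plan": "Premium customers get refunds within 2 business days.",
}

def search(topic: str) -> str:
    # Stand-in for a retriever or tool call.
    return KNOWLEDGE.get(topic, "")

def plan_next_step(question: str, gathered: dict[str, str]) -> Optional[str]:
    """Stand-in for an LLM planner: return the next topic to fetch, or None when done."""
    for topic in KNOWLEDGE:
        mentioned = any(word in question.lower() for word in topic.split())
        if topic not in gathered and mentioned:
            return topic
    return None

def agentic_retrieve(question: str, max_steps: int = 5) -> dict[str, str]:
    gathered: dict[str, str] = {}
    for _ in range(max_steps):  # cap the loop so the agent cannot run forever
        topic = plan_next_step(question, gathered)
        if topic is None:  # the planner decided it has enough context
            break
        gathered[topic] = search(topic)
    return gathered

result = agentic_retrieve("How fast is a refund on the premium plan?")
print(result)
```

The key difference from a single-step pipeline is that retrieval happens inside a loop, and the decision to fetch more or stop is made after looking at what has been gathered so far.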

Major Reasons Why Organizations are Heavily Investing in RAG Architectures

- Improved accuracy and trust by grounding AI responses in real data

- Reduced hallucinations compared to standalone LLMs

- Access to up-to-date information without retraining the model

- Faster deployment and lower costs than fine-tuning large models

- Scalable architectures that grow with enterprise data

- Better explainability and compliance for regulated industries

- Future-ready foundation for production-grade applications

RAG vs. Semantic Search

| Basis | Semantic Search | RAG |
|---|---|---|
| Primary purpose | Finds the most relevant documents or content | Retrieves information and generates a complete answer |
| Output | List of documents or passages | Natural language responses grounded in retrieved data |
| Role of LLMs | Optional or limited | Core component of the system |
| Knowledge usage | Retrieves existing content only | Uses retrieved content to directly answer complex queries |
| Hallucination risk | None from generation, since existing content is returned as-is | Reduced, though not eliminated, through grounded retrieval |
| Use of vector search | Yes | Yes |
| User experience | User reads and interprets results | AI provides a ready-to-use answer |

Semantic search helps users find information, while RAG helps users understand it by turning retrieved data into accurate conversational responses.

Looking to implement RAG in your business?

Whether you’re exploring customer support automation or AI-driven knowledge systems, RAG architecture can unlock measurable impact. Partner with Trigma's experienced AI teams to design, build, and deploy RAG solutions tailored to your data and business growth.